A couple of weeks back, I received a question about backing up and restoring the embedded vPostgres database in the new vCenter Server Appliance (VCSA) 6.0. At the time, the only guidance I had seen was KB 2110294 and the vSphere 6.0 Documentation here, both of which recommend taking a full VM backup of either vCenter Server for Windows or the VCSA to properly protect your vCenter Server.

Just recently I came across VMware KB 2091961, which provides some details on backing up only the vPostgres DB. Having said that, a database backup alone is not sufficient to perform a proper restore if you completely lose your vCenter Server. Other sources of data within the vCenter Server and the Platform Services Controller are also required, so restoring the database only works if you still have access to the original system. This is why a full VM backup is still the recommended approach.

For those who want to be able to restore just the database, the process listed in the KB is currently a manual step that uses a Python script provided in the KB. I thought it would be useful to demonstrate how you could schedule recurring backups during off-peak hours using a simple cronjob. What interested me more was the how and where of the overall process, specifically where the backups should be stored. One option would be to mount a backup NFS share directly onto the VCSA and place all backups on that volume. Another option would be to upload the backups directly to a cloud storage provider such as Amazon S3. I decided to take a look at the latter option.

In searching online, I found that Amazon offers a nice command-line tool called the AWS CLI, which provides S3 functionality such as the 'cp' command, and I was able to install it on the VCSA without any issues. You can find the instructions for installing the AWS CLI here. I would also recommend that you create a dedicated user with access to the S3 bucket used for storing the backups and then follow the steps here to configure access for the AWS CLI. When asked about the Amazon Region as part of the configuration, I found this page to be helpful in listing the region names.

Disclaimer: Installing 3rd-party tools and products on the VCSA is not officially supported, and you may be asked by GSS to remove them during troubleshooting.

If everything is installed correctly, you should be able to run the following command to ensure you can reach the S3 bucket:

aws s3 ls s3://[NAME-OF-YOUR-S3-BUCKET]

To tie everything together, I created a simple shell script called backup_vcsa_vpostgres_db.sh which contains a couple of variables that you will need to edit:

- VPOSTGRES_BACKUP_SCRIPT - The path to the Python vPostgres backup script

- AWS_CLI - The full path to the AWS CLI binary

- AWS_S3_BUCKET - The name of the S3 bucket using the syntax s3://NAME-OF-YOUR-S3-BUCKET

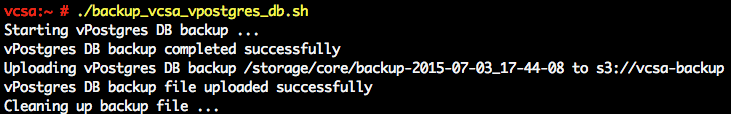
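For readers who cannot see the screenshot, here is a minimal sketch of what such a script might look like. It is not the original script. The three variable names come from the list above; everything else is an assumption: the paths, the hypothetical `BACKUP_DIR` variable and `backup_and_upload` function, and the `python backup_lin.py -f <file>` invocation, which is assumed to match the usage given in KB 2091961.

```shell
#!/bin/bash
# Sketch only: back up the embedded vPostgres DB and copy the file to S3.

# Path to the Python vPostgres backup script from the KB (location is an assumption)
VPOSTGRES_BACKUP_SCRIPT=/root/backup_lin.py
# Full path to the AWS CLI binary (assumption; confirm with `which aws`)
AWS_CLI=/usr/local/bin/aws
# Destination bucket (placeholder)
AWS_S3_BUCKET=s3://NAME-OF-YOUR-S3-BUCKET
# Local staging directory for the backup file (hypothetical variable)
BACKUP_DIR=/tmp

backup_and_upload() {
    # Timestamped file name so each run produces a distinct object in the bucket
    local backup_file="${BACKUP_DIR}/backup_VCDB-$(date +%Y-%m-%d-%H%M%S).bak"

    # Assumed invocation of the KB script: -f names the output file
    python "${VPOSTGRES_BACKUP_SCRIPT}" -f "${backup_file}" || return 1

    # Upload the backup; the trailing slash keeps the original file name
    "${AWS_CLI}" s3 cp "${backup_file}" "${AWS_S3_BUCKET}/" || return 1

    # Remove the local copy only after a successful upload
    rm -f "${backup_file}"
}
```

Adding a call to `backup_and_upload` as the last line of the script runs the backup. Returning early on failure leaves the local backup file in place if the upload does not succeed.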

Before creating the cronjob, I would recommend that you run the script manually to ensure everything works as expected and that you are able to upload to your S3 bucket. Here is an example run of the script backing up to my S3 bucket, which I called "vcsa-backup".

You can quickly verify that the backup has been uploaded to the S3 bucket by running the "ls" command as shown earlier, or you can log in to the Amazon S3 console, where you should be able to see the backup files as shown in the screenshot below.

To schedule the script to run automatically at a set time, you can create a cronjob by running the following command:

crontab -e
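As an illustration, an entry like the one below would run the backup every day at 2:00 AM and append the script's output to a log file. Both the script location under /root and the log file path are assumptions; adjust them to wherever you placed the script.

```
# minute hour day-of-month month day-of-week command
0 2 * * * /root/backup_vcsa_vpostgres_db.sh >> /var/log/vcsa_vpostgres_backup.log 2>&1
```

Redirecting both stdout and stderr to a file makes it easier to check afterwards whether a scheduled run failed.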

For more information about setting up a cronjob, you can take a look here or Google your favorite resource. If you plan on storing backups with a cloud storage provider and, like most customers, your VCSA does not have direct internet access, you can configure an HTTP(S) proxy by editing /etc/sysconfig/proxy. If you prefer not to install the AWS CLI, you can also use this simple bash script, which uses an HTTP POST to upload to Amazon S3.
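For the proxy option mentioned above, the settings in /etc/sysconfig/proxy might look something like the following sketch. The proxy host and port are placeholders, and the variable names assume the standard SUSE layout of that file, so check them against the file on your appliance.

```
# /etc/sysconfig/proxy (proxy.example.com:3128 is a placeholder)
PROXY_ENABLED="yes"
HTTP_PROXY="http://proxy.example.com:3128"
HTTPS_PROXY="http://proxy.example.com:3128"
NO_PROXY="localhost, 127.0.0.1"
```

A new login session is typically needed before the updated proxy variables appear in the shell environment.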

Is the script backup_lin.py or something similar also available for the appliance on 5.5?

I currently have a daily cron job set up to kick off a shell script that invokes the backup_lin.py script. Any thoughts on how I could get it to bounce an email off my internal mail relay if it errors?

Hi William,

Question about log files (specifically Postgres) on the VCSA. (You recently helped me convert my Windows-based install to VCSA with the fling tool, thanks again, you rock!) My Linux knowledge is limited in scope, and I don't have any experience with Postgres. We are doing a full VM-level backup of the VCSA using Commvault. In a SQL world, transaction logs are truncated after a backup, which keeps the log file drive size in check, but with a VM-level backup I assume this is not the case. What steps can we take to ensure log files don't grow out of control and force us to constantly expand the VMDK? Is there a circular logging equivalent option for Postgres? We are currently running 5.5 U3 but are planning on upgrading to 6.0 U1.

Thanks again for your guidance.

Nelson

Do you think this would be possible using Glacier instead of S3?

Will this still work for VCSA 6.5? It appears the new backup feature is not automated.

Hi William

As always, this blog post is a good one.

I have a couple of questions regarding external PSC and VCSA backup:

1. VMware KB 2091961 talks about the embedded VCSA and PSC. Can we follow the same approach for external PSCs and VCs?

2. Is an image-level full backup a good approach for backing up and restoring PSC and VCSA appliances? Please advise. Thanks.